Geek Stuff category archive

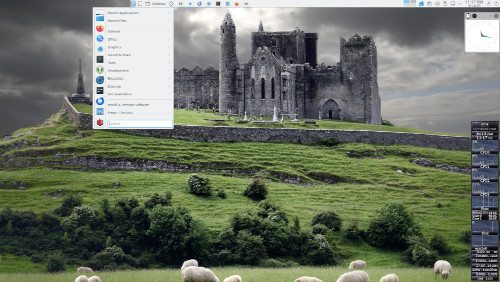

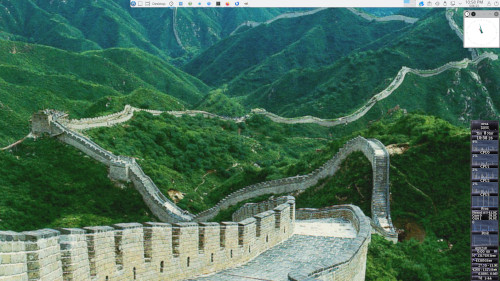

The Great Wallpaper of China

Mageia v. 9 with the Plasma Desktop. Xclock is in the upper right; GKrellM (my favorite system monitor), in the lower right. The wallpaper is from my collection.

Artificial? Yes. Intelligent? Not So Much.

Mendacious? Well, they’re just bots. They do what they’re told.

From El Reg:

What they found is that AI models will often lie in order to achieve the goals set for them.

Artificial? Yes. Intelligent? Not So Much.

The dialog: The daughter of a London bus driver?

The closed caption: The daughter of Alinda Masdrayva?

The stupid: Mind-boggling.

DOGE Bull in the China Shop

El Reg reports that DOGE and the Trump maladministration are gutting the United States’s cyber security efforts. A snippet:

You might think, if you’re outside the US, who cares? Unfortunately, whether you like it or not, the US has long taken the lead in technical security.

Follow the link for the details, and, remember, persons who don’t know how stuff works should not be put in charge of working that stuff.

Artificial? Yes. Intelligent? Not So Much.

Capable of making stuff up out of thin air? You betcha!

Artificial? Yes. Intelligent? Not So Much.

Telling you what you want to hear? Just maybe.

At Psychology Today Blogs, John Nosta argues that AI bots are being engineered to tell us what their algorithms “think” we want to hear. A snippet:

Artificial? Yes. Intelligent? Not So Much.

Chum for honeypots? Gotcha, sucker.

Artificial? Yes. Intelligent? Not So Much.

J. Pat O’Malley, in the voice of Pithias: Up until yesterday, he resided at a place of felonious intent. A state prison, I believe they call it.

The closed caption: Up until yesterday, he resided at a place of Thelonious and Tent.

The most artificial thing about artificial intelligence is the hype that it’s in some way intelligent. It’s an algorithm, and that’s all it is. And Thelonious and Tent are just two streets that intersect somewhere in the algorithm’s hallucination.

Artificial? Yes. Intelligent? Not So Much.

I must venture that this is a bad look for AI.


The dialog: Talking of money, the old filthy lucre.

The closed caption: Talking of money, the old filthy looker.

The stupid: Real, not artificial.

Afterthought:

I must admit, I have known some filthy lookers in my time, but that’s beside the point.

Artificial? Yes. Intelligent? Not So Much.

Sexist as all get-out? You can bet your subscription to Hustler on it.

Artificial? Yes. Intelligent? Not So Much.

Bait for the marks? You bet your sweet bippy.

Artificial? Yes. Intelligent? Not So Much.

Able to solve complex math problems? I’m sorry, but that just doesn’t add up.

All the World’s a Stage

And all the men and women merely players (whether they want to be or not).

One more time, “social” media isn’t.

Artificial? Yes. Intelligent? Not So Much.

Bait for the easily bamboozled? Fritz Kessler counsels caution.

Signal Achievements, Reprise

Security expert Bruce Schneier takes a deep dive into Signalgate and its implications. In light of recent events, the whole thing is worth a read. Here’s a tiny little bit.

Artificial? Yes. Intelligent? Not So Much.

Via Der Spiegel, a very sad story that illustrates why maybe, just maybe, AI robots should talk like robots, as Bruce Schneier recently suggested.

Artificial? Yes. Intelligent? Not So Much.

Susceptible to a misdirection play? Perha–Look! Over there!

Artificial? Yes. Intelligent? Not So Much.

Capable of perjury? You can swear on it.