Give Me a Break category archive

The Wedding Industrial Complex

This couple sold tickets to bride.

I suspect I’m not the only person who thinks the wedding industrial complex is out of control.

Dis Coarse Discourse

That some students are demonstrating in favor of a cease-fire in Gaza and against the killing there does not ipso facto mean that they support Hamas.

It means they want the bloodshed to end.

But, honest to Betsy, you sure as heck wouldn’t know it from a lot of the punditry about the protests.

I would like the killing to end, and I sure as heck don’t support Hamas; after all, they started (this round of) it.

Deceptive by Design

At Psychology Today Blogs, Penn State professor Patrick L. Plaisance looks at the hazards of designing chatbots and similar “AI” mechanisms (after all, that’s what they are: mechanisms) to interact with users (i.e., people) as if said mechanisms were people. For example, he mentions programming them so that they appear to be typing or speaking a response at a human-like speed when, in actuality, they formed their complete response in nanoseconds.

He makes three main points; follow the link for a detailed discussion of each.

- Anthropomorphic design can be useful, but unethical when it leads us to think the tool is something it’s not.

- Chatbot design can exploit our “heuristic processing,” inviting us to wrongly assign moral responsibility.

- Dishonest human-like features compound the problems of chatbot misinformation and discrimination.

Suffer the Children

Shorter Nebraska Governor Jim Pillen: Let them eat cake.

Facebook Frolics

Oh, Deere. Something posted on Facebook was of–er–questionable accuracy.

One more time, “social” media isn’t.

The Fee Hand of the Market

At the Inky, Harold Brubaker takes a look at hospital fees for various services that have been recently made available under a new federal regulation strongly opposed by hospitals and insurers. He concludes that they make no sense when exposed to the light. A snippet; follow the link for more.

Those are the prices consumers with high-deductible plans would have to pay to scan their knee and find out how serious the source of their pain is.

And replacing that knee would cost from $12,300 to more than $44,000 under insurance plans that IBC sells to employers and individuals.

The notion, often promoted by persons who call themselves “conservative,” that someone who is sick will comparison-shop for health care has always been fanciful. The reality is that, if there is a choice, a patient will go where his or her doctor says, and, in rural areas, there is often little or no choice from the git-go. Add in a landscape of wildly variable and irrational pricing schemes, and comparison shopping for health care becomes an ~~impossible dream~~ all-too-possible nightmare.

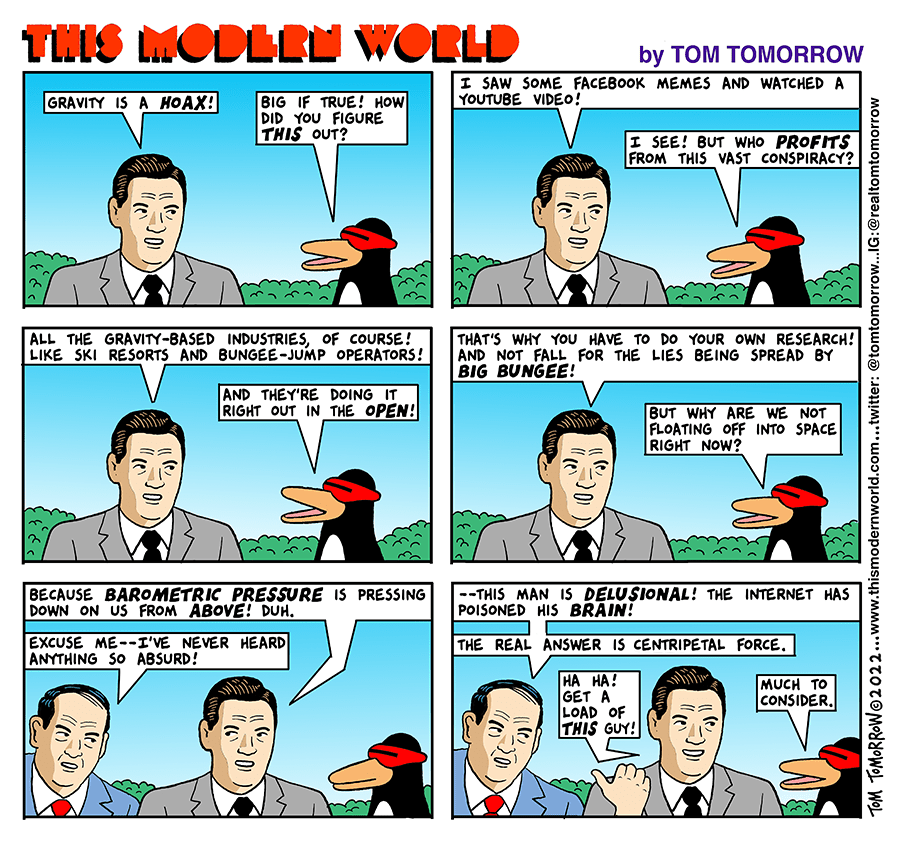

The Misinformation Superhighway

After drawing a distinction between misinformation and disinformation, Aditi Subramaniam offers some reasons as to why we are susceptible to misinformation (think the clickbait headlines that Snopes is so fond of debunking) and some techniques for dealing with it.

She starts by telling a story of her own trip down the rabbit hole of a clickbait headline (follow the link below to see what she discovered about said headline and its tenuous connection to facts, as well as for some hints to help avoid falling down your own rabbit holes). Here’s a bit of her article.